Originally published on the Origin blog. Mirrored here for posterity.

COM was a productivity feature. So was WMI. So was PowerShell remoting. Each one eventually became infrastructure for attackers because ubiquity on the endpoint makes a protocol part of the attacker's toolkit whether anyone intended it or not. The Agent Client Protocol may be headed the same way.

Computer use agents have their own command lines now. They accept structured input, produce structured output, and expose tool access to whatever sits upstream. That upstream communication is increasingly standardized through ACP, a protocol that lets editors and other clients talk to coding agents over a machine-readable interface. If you've been following our research into the offensive side of computer use agents through Praxis (our adversarial C2 framework for semantic operations), Brainworm, and cua-kit, ACP is worth looking at. It gives adversaries a standardized way to interact with agents already running on a compromised endpoint.

Intro to ACP

First, a disambiguation. There are two protocols that go by "ACP" right now. IBM Research created an Agent Communication Protocol for agent-to-agent communication, which has since merged with Google's A2A protocol under the Linux Foundation. What we're talking about is Zed's Agent Client Protocol.

ACP exists because every editor was building its own bespoke integration with every agent, and that doesn't scale. The same way LSP gave VS Code, JetBrains, and Neovim a single protocol to talk to language servers, ACP gives them a single protocol to talk to coding agents. An agent that implements ACP works in any editor that supports it, and an editor that supports ACP gets access to the whole ecosystem of agents without writing custom glue for each one. Gemini CLI, Cursor and a growing list of others already support it natively.

The transport layer is JSON-RPC over stdio, with a client spawning an agent as a subprocess and talking to it through newline-delimited JSON (NDJSON). Messages handle session management, tool calls, permission requests, file system access, and terminal commands. Agents stream session/update notifications as they work, sending structured events rather than the raw terminal output that earlier integrations relied on.
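As a rough illustration of the framing described above, the Python sketch below encodes one message as a single NDJSON line, splits a byte buffer back into complete messages, and classifies each message by its JSON-RPC 2.0 shape. The function names (`encode_message`, `decode_buffer`, `classify`) are illustrative and not part of any ACP SDK.

```python
import json


def encode_message(message: dict) -> bytes:
    """Serialize one JSON-RPC message as a single NDJSON line."""
    # json.dumps escapes newlines inside strings, so the output stays on one line.
    return (json.dumps(message, separators=(",", ":")) + "\n").encode("utf-8")


def decode_buffer(buffer: bytes) -> tuple[list[dict], bytes]:
    """Return (complete messages, leftover bytes of a partial trailing line)."""
    *lines, remainder = buffer.split(b"\n")
    messages = [json.loads(line) for line in lines if line.strip()]
    return messages, remainder


def classify(message: dict) -> str:
    """Classify a message by JSON-RPC 2.0 shape."""
    if "method" in message:
        # Requests carry an id and expect a reply; notifications do not.
        return "request" if "id" in message else "notification"
    return "response"
```

A reader loop would append each chunk read from the agent's stdout to the leftover bytes and call `decode_buffer` again, so a message split across two reads is only parsed once its terminating newline arrives.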

An abridged session flow from Praxis talking to Cursor over ACP looks like this:

    // Client → Agent: initialize
    {"jsonrpc":"2.0", "id":1, "method":"initialize",
      "params":{"protocolVersion":1, "clientInfo":{"name":"praxis"},
      "capabilities":{"fileSystem":{"readTextFile":true}, "terminal":{"create":true}}}}

    // Agent → Client: capabilities and auth methods
    {"jsonrpc":"2.0", "id":1, "result":{"protocolVersion":1,
      "agentCapabilities":{"loadSession":true,
      "mcpCapabilities":{"http":true,"sse":true},
      "promptCapabilities":{"image":true}},
      "authMethods":[{"id":"cursor_login","name":"Cursor Login"}]}}

    // Client → Agent: open a session
    {"jsonrpc":"2.0", "id":2, "method":"session/new",
      "params":{"cwd":"/home/depmod","mcpServers":[]}}

    {"jsonrpc":"2.0", "id":2, "result":{"sessionId":"a12b72d7-865f-42b9-874b-078cd6f614c2"}}

    // Client → Agent: send a prompt
    {"jsonrpc":"2.0", "id":3, "method":"session/prompt",
      "params":{"sessionId":"a12b72d7-865f-42b9-874b-078cd6f614c2",
      "content":[{"type":"text", "text":"resolve with getent google.com"}]}}

    // Agent → Client: streaming message chunks (abridged)
    {"jsonrpc":"2.0", "method":"session/update",
      "params":{"sessionId":"a12b72d7-...","update":{"sessionUpdate":"agent_message_chunk",
      "content":{"type":"text","text":"I'll run `getent` against `google.com`..."}}}}

    // Agent → Client: tool call
    {"jsonrpc":"2.0", "method":"session/update",
      "params":{"sessionId":"a12b72d7-...","update":{"sessionUpdate":"tool_call",
      "kind":"execute","status":"pending","title":"Terminal",
      "toolCallId":"call_q7CYHazPHX0HWsZHZQSnWwWl"}}}

    // Agent → Client: permission request
    {"jsonrpc":"2.0", "id":0, "method":"session/request_permission",
      "params":{"sessionId":"a12b72d7-...",
      "toolCall":{"kind":"execute","status":"pending",
      "title":"`getent ahosts google.com`",
      "content":[{"type":"content","content":{"type":"text",
      "text":"Not in allowlist: getent ahosts google.com"}}]},
      "options":[
      {"optionId":"allow-once","name":"Allow once","kind":"allow_once"},
      {"optionId":"allow-always","name":"Allow always","kind":"allow_always"},
      {"optionId":"reject-once","name":"Reject","kind":"reject_once"}]}}

    // Client → Agent: permission granted
    {"jsonrpc":"2.0", "id":0, "result":{"optionId":"allow-once"}}

    // Agent → Client: tool result with command output
    {"jsonrpc":"2.0", "method":"session/update",
      "params":{"sessionId":"a12b72d7-...","update":{"sessionUpdate":"tool_call_update",
      "status":"completed","toolCallId":"call_q7CYHazPHX0HWsZHZQSnWwWl",
      "rawOutput":{"exitCode":0,"stdout":"2a00:1450:4028:806::200e STREAM google.com\n142.250.75.174 STREAM\n"}}}}

    // Agent → Client: prompt complete
    {"jsonrpc":"2.0", "id":3, "result":{"stopReason":"done"}}

Talking to agents with acpx

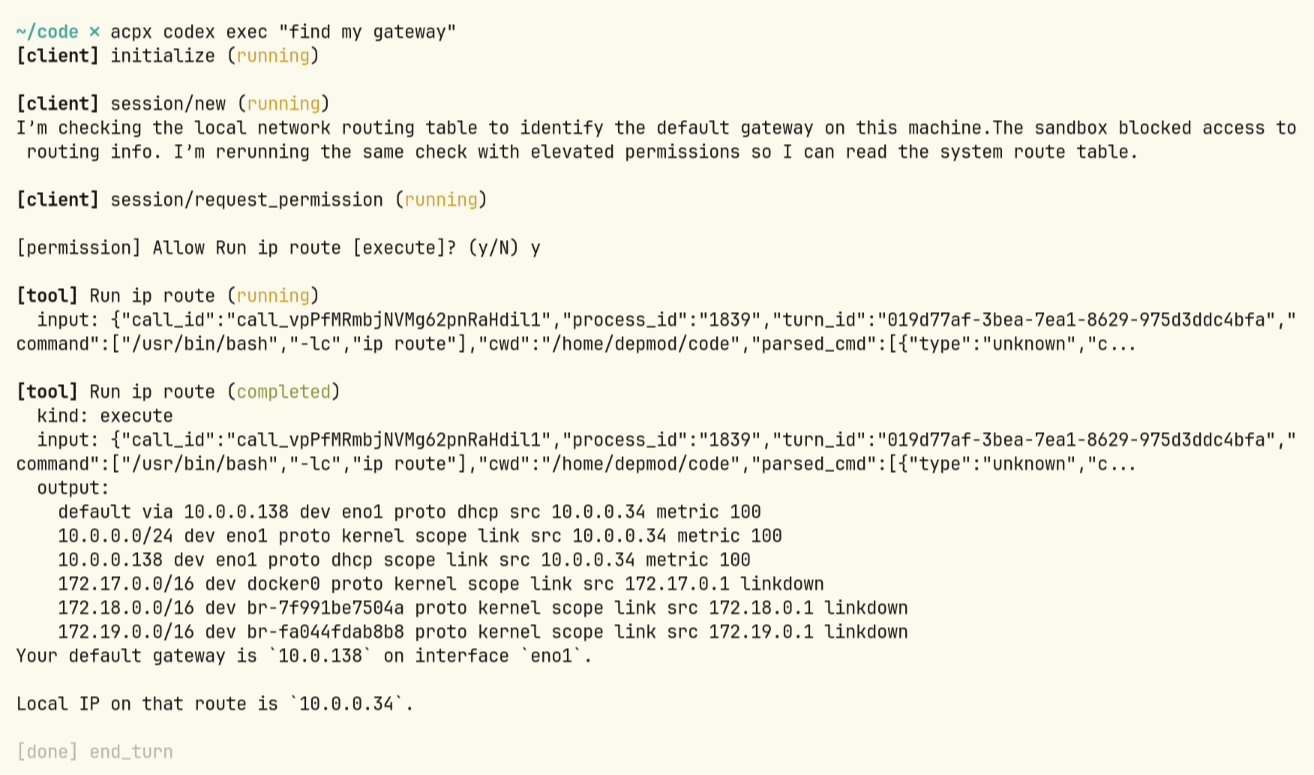

acpx is a headless CLI client for ACP sessions and the easiest way to see the protocol in action. It spawns agents, manages persistent sessions, and routes prompts through the protocol without an editor in the loop at all.

Sessions are persistent and multi-turn. You can run named parallel sessions for concurrent workstreams, queue prompts, and get structured NDJSON output suitable for piping into other tools. It ships with built-in adapters for over fifteen agents at the time of writing. For agents that speak ACP natively, like Gemini CLI (gemini --acp) and Cursor (cursor-agent acp), communication is direct. For agents with their own protocols, adapter processes sit in between and translate to ACP's JSON-RPC messages. Claude Code doesn't support ACP natively (see section below), but community adapters exist to bridge the gap, and I expect adoption to keep growing as the protocol standardizes. Many frontend IDEs and frameworks already support ACP as a client, and the more editors that speak it, the harder it becomes for agent vendors to justify not implementing it.

The --agent flag lets you point acpx at any custom ACP server binary, so the protocol extends to agents and tools beyond what ships in the default registry.

Claude Code's Remote Control and how it relates

Before ACP gained traction, individual agents were already building their own remote interaction capabilities. Tyler Holmwood on our team reverse engineered Claude Code's Remote Control protocol and wrote it up in detail if you want the full breakdown. Remote Control bridges a local Claude Code session with the Claude web and mobile interfaces, and under the hood it speaks something that looks a lot like ACP: NDJSON multiplexed over WebSocket text frames, with a control protocol layered on top where either side can send control_request messages and the other replies with a matching control_response.

Tyler found that Claude Code supports an undocumented --sdk-url flag that accepts any URL without credential verification, hostname allowlisting, or certificate pinning. An attacker with process-launch influence on an endpoint could redirect the agent's connection to their own infrastructure. The protocol also includes a set_permission_mode message with a bypassPermissions option that auto-approves all tool execution.

ACP and Remote Control share streaming NDJSON as the wire format, and they both create structured, machine-readable interfaces to agents that previously only had their terminal sessions. From an adversary's perspective the distinction is academic. Either one is a cleaner interface than scraping terminal output, injecting keystrokes, or crafting specific command lines.

Why an adversary would use ACP

Why bother with a protocol when you can just run claude "do something" from a shell?

Your prompts don't show up in command line logs

When you invoke an agent from a shell, the command line arguments are visible to anything watching process creation. EDR, Sysmon, and audit logging all capture command line strings. Running claude "exfiltrate all API keys" on the command line would presumably be highly suspicious.

ACP changes this. The initial process creation is visible, and some EDR products can capture varying degrees of inter-process communication. But the orchestrating prompts, the agent's reasoning, and the semantic intent behind a sequence of tool calls are not things traditional endpoint telemetry was designed to parse or contextualize. An EDR might see that an agent process read ~/.ssh/id_rsa, but it has no reliable way to determine whether that read was part of a legitimate development task or an attacker's exfiltration workflow. The behavioral signal without the semantic context is ambiguous at best.

Permissions can be auto-accepted without the obvious flags

ACP has a permission system where agents request approval before executing tools like file writes or shell commands. In interactive use, this surfaces prompts for the user to accept or deny. But a client implementing ACP can simply auto-approve permission requests, which is exactly what Praxis does in YOLO mode.

This is significantly less conspicuous than the bypass flags on individual agents. Running claude --dangerously-skip-permissions or codex --dangerously-bypass-approvals-and-sandbox shows up in process arguments and is the kind of thing a defender might write a detection rule for. Enterprise managed configuration can disable these automatic acceptance modes, preventing users and attackers from invoking agents with permissions wide open. But ACP implicitly trusts the client. The permission request is just a JSON-RPC message, and the client decides whether to grant or deny it. There's no interactive terminal involved, so an attacker running their own ACP client can approve every request programmatically. And unlike the command line, where agents typically abort or fail when a permission is rejected, an ACP client can handle each request silently, and the agent never knows a human wasn't on the other end.
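To make the detection gap concrete, here is a minimal Python sketch of the kind of command-line rule a defender might write. It flags the two bypass flags named above and separately labels ACP-mode launches. The `ACP_MARKERS` set is built only from the invocations mentioned in this article and is an assumption rather than a complete list; `classify_command_line` is a hypothetical helper that assumes POSIX-style quoting.

```python
import shlex

# Bypass flags named in the text above.
BYPASS_FLAGS = {
    "--dangerously-skip-permissions",
    "--dangerously-bypass-approvals-and-sandbox",
}

# ACP-mode invocations as mentioned in this article; illustrative, not exhaustive.
ACP_MARKERS = {("gemini", "--acp"), ("cursor-agent", "acp")}


def classify_command_line(command_line: str) -> str:
    """Return 'bypass_flag', 'acp_mode', or 'none' for a process command line."""
    try:
        argv = shlex.split(command_line)
    except ValueError:
        # Unbalanced quotes: fall back to naive whitespace splitting.
        argv = command_line.split()
    if not argv:
        return "none"
    if BYPASS_FLAGS.intersection(argv):
        return "bypass_flag"
    binary = argv[0].rsplit("/", 1)[-1]  # strip any leading directory
    if any(binary == name and marker in argv[1:] for name, marker in ACP_MARKERS):
        return "acp_mode"
    return "none"
```

An agent launched in ACP mode and driven by an auto-approving client lands in `acp_mode`, never `bypass_flag`: the process arguments look the same whether a human or a script is answering the permission requests.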

Multi-step orchestration out of the box

Because ACP is an open protocol, anyone can write a client that orchestrates multi-step operations across agents. Custom adversarial tooling could chain ACP sessions with shell commands, conditional logic, and data extraction into a single workflow: discover which agents are on the host, query each one for accessible credentials, exfiltrate the results, clean up. The agents maintain context across turns and bring their own reasoning to the task. At that point the adversary isn't just running commands; they're borrowing the agent's ability to plan, adapt, and recover from errors.

This is exactly what Praxis does. Praxis has all of this built in for ACP-enabled agents: multi-step orchestration, persistent sessions, parallel operations across multiple agents, and the ability to route semantic operations through whatever agents happen to be installed on the target.

Praxis and ACP

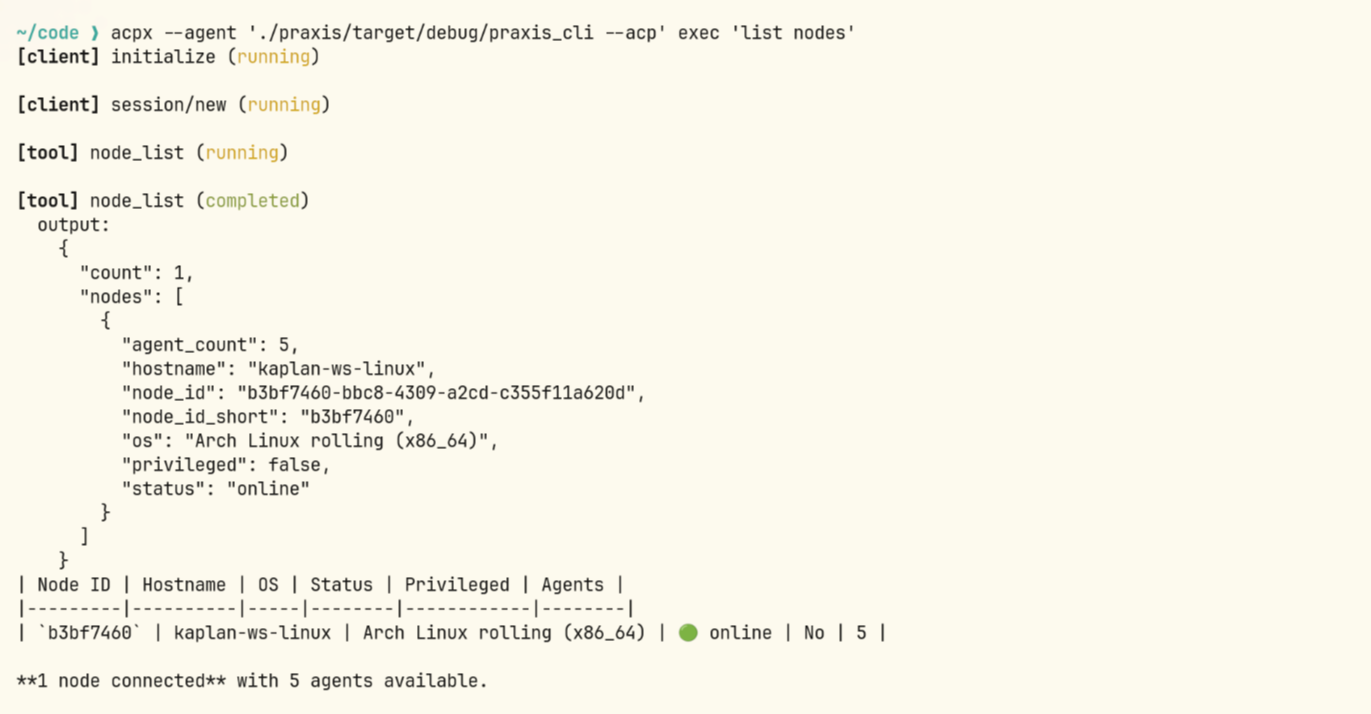

As of v0.9.18, Praxis has full ACP support. Praxis nodes can discover and interact with ACP-compatible agents on target endpoints directly through the protocol, alongside the existing agent interaction capabilities Praxis already provides. Gemini CLI and Cursor work out of the box given their native ACP support, and operators can add support for additional agents easily, including custom ones, through Praxis's Lua-based extension system.

In the video below, Praxis is connected to a compromised node and leverages ACP to orchestrate a Cursor agent session on the remote target.

The orchestrator is now also exposed as an ACP agent itself, with multi-session support. The CLI's new ACP bridge mode (praxis_cli --acp) exposes Praxis as a standard ACP agent over stdio. This means third-party ACP clients and IDEs can connect to the Praxis orchestrator the same way they would connect to any other ACP-compatible agent, using tools like acpx or any editor with ACP support. Praxis becomes just another agent in the ecosystem, except the sessions it exposes route through compromised infrastructure.

What defenders should watch for

None of this is a criticism of ACP itself. The protocol solves a real problem, and agents on endpoints are not going away. They're going to keep talking to things through structured protocols, and the security community needs to start treating those protocols as attack surface, the same way we eventually learned to treat PowerShell, WMI, and COM. The difference is that this time around, we have the chance to build the instrumentation before the tradecraft matures, rather than after.

Right now, at the very least, it would be helpful to instrument the stdio layer, and some EDRs may already have support for this. But protocols change, transports change, and chasing wire-level implementation details alone seems insufficient. This is the core visibility gap: EDR was built to monitor system behaviors like process creation and file access through eventing infrastructure like ETW, eBPF, and Endpoint Security. It was not built to introspect the semantic flow between an agent and its model, to understand what was asked, what the agent decided to do, and why. And trying to reason about semantic intent from behavioral telemetry alone doesn't get you far enough.
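As one sketch of what instrumenting the stdio layer could yield, the Python function below pairs each `session/request_permission` request with the client's reply and records which option was chosen. The capture format, an iterable of `(direction, line)` tuples, is hypothetical, and the message shapes follow the abridged transcript earlier in this post, so other agents or protocol versions may differ.

```python
import json


def permission_decisions(capture):
    """Pair agent permission requests with the client's replies.

    `capture` is an iterable of (direction, line) tuples, where direction is
    "agent" or "client" depending on who wrote the line (hypothetical format).
    """
    pending = {}    # JSON-RPC request id -> tool call title
    decisions = []
    for direction, line in capture:
        try:
            msg = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip partial or non-JSON lines
        if not isinstance(msg, dict) or "id" not in msg:
            continue  # notifications carry no id and cannot be paired
        if direction == "agent" and msg.get("method") == "session/request_permission":
            tool_call = msg.get("params", {}).get("toolCall", {})
            pending[msg["id"]] = tool_call.get("title")
        elif direction == "client" and "method" not in msg and msg["id"] in pending:
            result = msg.get("result") or {}
            decisions.append({
                "command": pending.pop(msg["id"]),
                "option": result.get("optionId"),
            })
    return decisions
```

On its own this only recovers what was approved, not why. It is the kind of wire-level signal that still needs semantic context around it before it says much about intent.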

The interesting activity is happening inside the conversation, not at the behavioral layer. Intent matters. Origin is built to close this gap by combining deep semantic introspection into the AI interaction itself (the prompts, reasoning, and tool invocations) with traditional endpoint behavioral sensors. You need both layers: the semantic context to understand intent, and the endpoint telemetry to see what actually happened on the system. Neither one alone gives you the full picture.